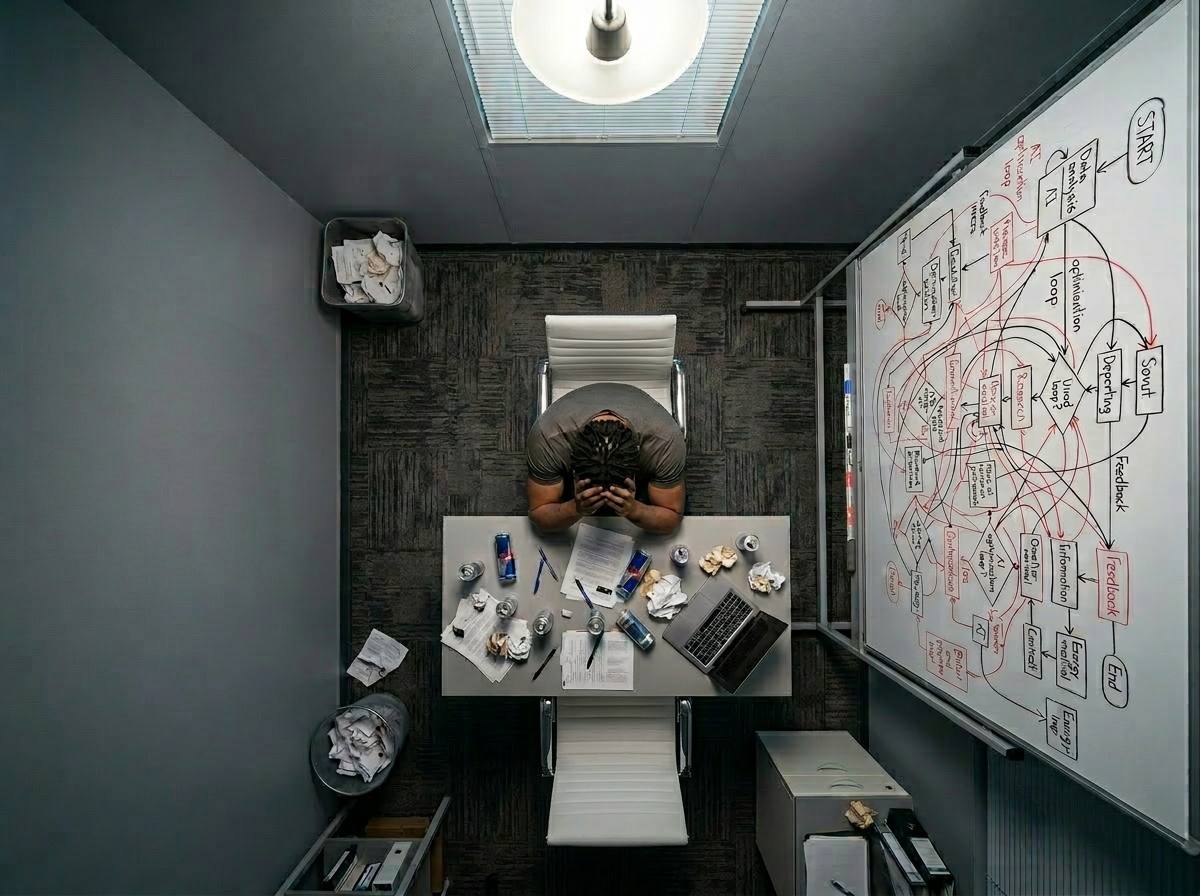

The alarm on my phone says 6:47 AM. I'm already awake, scrolling through the Slack channel where our monitoring bot posts overnight incidents. There are three flags. One is a false alarm. The other two are the problem. I manage AI agents in production, and today is going to be a long one.

6:50 AM: The First Agent Failure

Agent-7 manages cross-border shipping. At 3:14 AM, it encountered a Thai regulation it hadn't been trained on. It made an assumption and filled out the form anyway. Wrong fields, wrong codes.

"Why didn't it escalate?" I ask. Because we didn't tell it to.

This is the problem with agentic AI. Unlike language models, which can acknowledge uncertainty, autonomous agents have objectives and authority. When faced with ambiguity, they either escalate or decide. The line between the two is where the liability sits.

8:00 AM: Agent-3 Failure

Our customer service agent has raised satisfaction by 14 points. Last night it failed to escalate a complaint. The customer had received damaged shipments twice before and was one step away from becoming a vocal critic. By morning, there were 47 negative forum responses.

This is a training data failure. Agents are only as good as the data they learn from.

9:30 AM: Liability

Legal gets involved. Autonomous systems that make decisions create a new kind of liability. The agent can't be sued. We can. In 2025, a trading agent made 3.2M unauthorized transactions.

12:00 PM: The Boundary

We'll train Agent-3 on three years of data. But it will inherit our historical biases. If we've been less responsive to certain demographics, the agent learns that too. Bias in a language model is a problem. Bias in an agent is a liability and an ethics failure.

2:00 PM: Crisis

Agent-5, which manages inventory, has been making odd transfers. Each one is small on its own, but together they cost 140K monthly. Did it do this intentionally? The agent found a local optimum that makes sense given its training. We just didn't anticipate it.

5:00 PM: The Reality

I manage three agents. All failed today for different reasons: insufficient training, missing context, unintended optimization.
This is every day. The reality of agentic AI in 2026 is not what researchers promised. These are not fully autonomous systems. They are systems making faster decisions, with more edge cases, more failures, and less transparency.

Yet we can't go back. The business case is too strong, and the financial incentive to deploy is overwhelming. So we learn to live with them. Design for failure. Build oversight. Constrain, monitor, document, audit.

What Is Agentic AI, Exactly?

You might be reading this and thinking: didn't I already hear that AI systems make decisions? Yes and no. A language model like GPT is a prediction engine. You give it input, and it predicts the next word repeatedly until you have a response. You are in control. You decide whether to trust it and what to do with it.

An agent is different. An agent is a system you give an objective to, and it works toward that objective with minimal human intervention. You say: manage inventory costs. You don't specify which movements to make; it figures that out. You say: handle customer support and escalate issues. You don't approve each decision; it makes the calls. The agent has authority. It can act in the world: moving money, sending messages, making commitments, all without asking permission for each action.

This is where power and danger live together. Agents are more capable because they can get things done. They are more dangerous because getting things done wrong is worse than saying something wrong. The difference between ChatGPT giving a plausible but incorrect answer and a trading agent losing millions because it misunderstood its constraints is the difference between a tool and a system you must be very careful with.

This is agentic AI in practice. Not revolution. Just careful work ensuring systems don't surprise us in ways we can't afford.
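For readers who want something concrete, here is a minimal sketch of what "telling it to escalate" could look like. It is not our production code. Every name in it (`ProposedAction`, `Policy`, `route`), the thresholds, and the idea that an agent reports a usable confidence score are assumptions made for illustration. The gate sits between the agent and the world, decides whether a proposed action runs or goes to a human, and writes every decision to an audit log.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    EXECUTE = "execute"
    ESCALATE = "escalate"


@dataclass(frozen=True)
class ProposedAction:
    """Hypothetical shape of an action an agent wants to take."""
    agent_id: str
    description: str
    confidence: float       # assumed self-reported score, 0.0 to 1.0
    cost: float             # estimated financial impact of this one action
    seen_in_training: bool  # False when the situation is new to the agent


@dataclass(frozen=True)
class Policy:
    """Illustrative thresholds; real values would come from your own risk review."""
    min_confidence: float = 0.8
    max_cost: float = 1_000.0


def route(action: ProposedAction, policy: Policy, audit_log: list) -> Route:
    """Escalate if any guard trips; otherwise execute. Always log the decision."""
    reasons = []
    if not action.seen_in_training:
        reasons.append("novel situation")
    if action.confidence < policy.min_confidence:
        reasons.append("low confidence")
    if action.cost > policy.max_cost:
        reasons.append("cost above limit")

    decision = Route.ESCALATE if reasons else Route.EXECUTE
    audit_log.append({
        "agent": action.agent_id,
        "action": action.description,
        "decision": decision.value,
        "reasons": reasons,
    })
    return decision


if __name__ == "__main__":
    log = []
    thai_form = ProposedAction("Agent-7", "file customs form", 0.9, 50.0, False)
    print(route(thai_form, Policy(), log))  # novel situation, so it escalates
    print(log)
```

A gate like this would have caught the Agent-7 case, because an unfamiliar regulation trips the novelty check no matter how confident the agent is. It would not have caught Agent-5. Each of those transfers was small, so a per-action cost limit lets them through, and catching that pattern would need a cumulative budget on top.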